By Vilas Madan, Sr Vice President – Growth Leader at EXL, where he works with enterprises operating in complex environments across APAC to operationalise data, analytics, and AI at scale.

What enterprises cannot see in AI adoption may matter more than what they can.

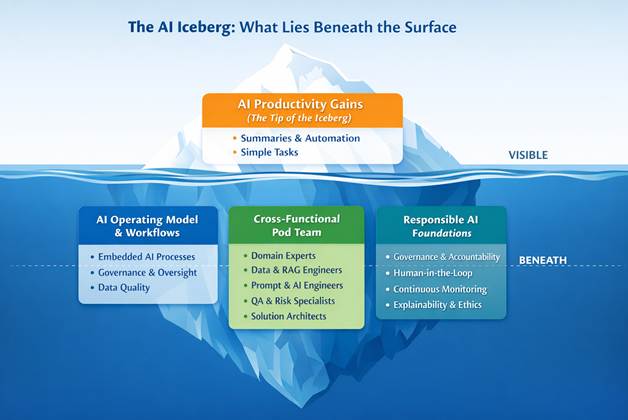

Recent reporting by The Guardian on AI-generated health misinformation exposed a hard truth about enterprise AI adoption. The most visible gains, such as faster workflows, quicker problem solving, and lower costs, are only the tip of the iceberg. Beneath that surface lies a far more complex challenge: trust. And in industries like healthcare and insurance, meeting that challenge is not optional.

Organisations usually introduce AI as a productivity tool. Automating routine tasks, summarising long documents, and speeding up analysis are driving measurable improvements across many domains. These use cases are also relatively easy to deploy. In regulated, high-stakes environments, however, guardrails for safe and ethical AI use matter just as much.

AI models alone cannot create the level of trust that high-risk, compliance-led environments require. Trust is built deliberately, through embedded governance, data discipline, and human oversight. That level of maturity sits well beyond early experimentation or pilot programs.

At EXL, the iceberg metaphor is a useful explanatory model for AI adoption. The visible productivity gains are at the tip. They are easy to see and easy to measure. But the bulk of the iceberg, the part that determines whether AI succeeds or fails at scale, sits below the waterline.

That ‘underwater’ part of the iceberg includes operating models, controls, people, and processes. In practice, those controls include governance frameworks that monitor fairness and bias in AI systems, and safeguard sensitive data through strong privacy and security standards. They ensure AI-driven decisions remain explainable and auditable. Just as importantly, organisations must define clear accountability for how AI is deployed, monitored, and corrected when outcomes fall short of expectations.

In other words, the hidden part of the iceberg is where most of the work happens, and where most organisations underestimate the effort required.

When organisations struggle with AI, it is rarely because AI is not powerful enough. Today’s models are extraordinarily capable. The failure point is almost always operational.

Organisations sometimes treat AI as a plug-and-play upgrade. To tap into its full potential, they are better off treating it as a living capability that is designed, governed, and continuously managed.

Responsible AI at scale requires a fundamentally different way of working. We see this emerging as multidisciplinary delivery teams, referred to as “pods”, that bring together the full range of expertise needed to make AI trustworthy in real-world conditions.

These teams typically include domain experts who understand the regulatory and operational realities of sectors like healthcare or insurance. Their role is critical. Without that human-led contextual knowledge, even technically accurate outputs can be misapplied, sometimes dangerously.

They also include data and database engineers (especially those working with retrieval-augmented generation) who ensure models draw from accurate and auditable data sources. Without these strong data foundations, organisations risk relying on AI systems whose outputs rest on unreliable data.

Prompt and model engineers are also necessary for designing and tuning AI outputs. They shape how AI handles ambiguity, how it draws on specific organisational context, and how it stays within defined boundaries.

As part of these multidisciplinary teams, quality assurance and risk specialists test AI outputs for bias, hallucination, and edge cases. In high-risk environments, these concerns are far from theoretical. They are operational realities that organisations should identify before systems go live.

Finally, solution architects and delivery leads ensure AI fits cleanly into existing workflows. AI that sits outside day-to-day operations is underutilised. It is embedded, governed AI that delivers real value.

This ‘pod’ model reflects the simple truth that AI is not a static tool. Data changes, model updates, and regulatory changes all make AI evolution inevitable. Without human oversight, that evolution leaves organisations using AI without the necessary guardrails. Patients, regulators, and policyholders may be less trusting of organisations that cannot demonstrate such guardrails are in place.

In healthcare and insurance, the consequences of getting this wrong are immediate and potentially very public. Incorrect recommendations, biased decisions, or opaque reasoning can directly affect patient outcomes and create financial risk. Once trust is lost, it is extremely difficult to recover.

These risks illustrate why speed cannot be the primary metric of AI adoption success. The next wave of AI leaders will not be those who moved fastest, but those who built the right foundations. They will be the organisations that regard trust as a design requirement from the beginning.

The capability of AI models is evident in the wide range of uses organisations have found for them. When AI fails, it is usually because organisations underestimate what it takes to make it scalable.

For enterprises operating in regulated, high-risk sectors, the message is clear. Productivity gains are valuable, but they are only the beginning. Real value sits beneath the surface, in the operating models, governance structures, and multidisciplinary collaboration that reduce risks. These approaches may feature less in headlines, but they are what make AI sustainable.

Organisations willing to look beyond the tip of the iceberg and invest in what lies beneath will be the ones that tap into AI’s full potential. For them, speed will not come at the expense of quality, nor will trust be sacrificed for efficiency.